This post is going to be a doozy. I started out considering our core focus - I was writing a blog on the security implications of AI use in small to medium businesses. That alone is quite a serious topic, but the more I wrote, the more I found I needed to write. So today, we’re branching beyond our typical "security, connectivity and resilience" sweet spot. It’s time to dive into the tech industry’s favourite two letters...

I want to start with some quotes. I’ve often found myself needing to explain AI to non-technical people and have consistently landed on this concept:

"AI is like an overly enthusiastic graduate: it often lacks context and it will make errors, but boy will it work fast."

Recently, an industry peer brought the whole idea to life with this one:

"AI is like power tools. In the hands of a skilled tradesman it will let them work faster with less fatigue. In the hands of someone who has never swung a hammer it could accidentally remove a finger."

Both of these analogies land on the same kernel of truth: AI isn't a replacement for your knowledge and your judgement - not yet, at least. Rather, it’s an enhancement. Now, when I say "AI," I’m explicitly referring to Generative AI or LLMs (Large Language Models). Think ChatGPT or Gemini. I’m not referring to Machine Learning, Computer Vision, Robotic Process Automation, or any of the myriad other forms of AI.

AI everywhere (even where it doesn't belong)

At the moment, it doesn't matter where you look; there’s AI either giving rise to new product categories or being bolted into places that don't make much sense.

- Microsoft Notepad or Paint with Copilot bolted on.

- A toothbrush with AI built in.

- Apple's "Apple Intelligence" that hallucinated news headlines.

- Or things like the AI Pins that just didn't survive the common sense test.

Then there are places where it perhaps does make sense, but too often it doesn't feel considered. I am, of course, talking about cloud software platforms. I can't think of a single one that hasn't added some sort of AI feature in the last 24 months. The problem I have is that in many instances, it feels like AI was added because of shareholder expectations, not because it was an obvious natural fit.

I’m setting this scene for one reason and one reason only: unless you’re managing to entirely avoid modern technology, modern software, and modern cloud services - you’re already using AI, maybe even unknowingly. And THIS is our segue into the next topic...

Where's my data?

Or the hidden cost of "free".

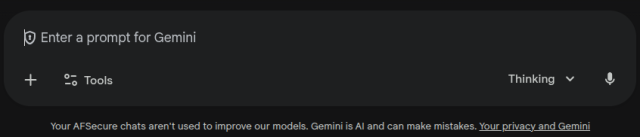

This is where the first version of this blog post was trying to live. AI is the living embodiment of the saying, "If you aren't paying for the product, you are the product." It’s an important distinction. When I use Gemini, for example, my chats all have the same footer at the bottom. One of the things it says is: "Your AFSecure chats aren't used to improve our models."

We use the business version of Gemini as our primary AI tool.

This is because if I were on the free/consumer tier of the product, everything I type would be getting sucked into the model, or at least could be. In short, I wouldn't be a user of the AI platform; I would be feeding it. It would be retaining my data to help it grow. This becomes a huge compliance burden for a shockingly long list of industries in Australia, especially with the 10 December 2026 transparency laws for rental/property applications looming.

The list of sectors that should prioritise using paid, enterprise-grade AI tiers to ensure data is not used for model training includes:

- Financial Services (Banking, Lending, Capital Markets)

- Health Services (Medical Diagnostics, Patient Records)

- Law Firms and Corporate Legal

- Consultancies and Professional Services

- Real Estate and Housing

- Human Resources and Recruitment

- Education (handling student records and minor data)

- Public Sector and Government (Essential Services and Public Records)

- Insurance and Actuarial Services

- Media (Intellectual Property protection)

In an era where the OAIC is broadening its use of compliance sweeps, it's important to get ahead of the issue.

So, how do you manage this risk? The first and most obvious step is to simply ensure you’re not getting sucked into "free" tiers. Interrogate your vendors: how are they using AI? Do they use your data as training data? What are your options to opt out? If you are using AI to great benefit, then it may be time to transition to a paid tier. Otherwise, you'll need some policies dictating what can and what can't be put into an AI prompt - though I'll be the first to admit this is very hard to police.
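For the more technically inclined, here's a rough idea of what a "check before you paste" helper could look like. This is only a sketch, not something we're claiming to run in production: the function name `flag_sensitive` and the three patterns are made up for illustration, and a real list would need to be tuned to the data your business actually handles.

```python
import re

# Hypothetical patterns for illustration only. They catch obvious formats,
# not every way sensitive data can appear in free text.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "Australian mobile number": re.compile(r"\b04\d{2}[ -]?\d{3}[ -]?\d{3}\b"),
    "possible card number": re.compile(r"\b(?:\d[ -]?){15}\d\b"),
}


def flag_sensitive(prompt: str) -> list[str]:
    """Return the labels of any patterns found in the prompt text."""
    return [
        label
        for label, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(prompt)
    ]


if __name__ == "__main__":
    draft = "Please summarise the complaint from jane@example.com, mobile 0412 345 678"
    hits = flag_sensitive(draft)
    if hits:
        print("Hold on, this prompt may contain:", ", ".join(hits))
    else:
        print("No obvious sensitive patterns found.")
```

A check like this only catches the obvious stuff. It won't spot a client's name or a proprietary process described in plain English, so it supports a policy rather than replacing one.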

That enthusiastic grad

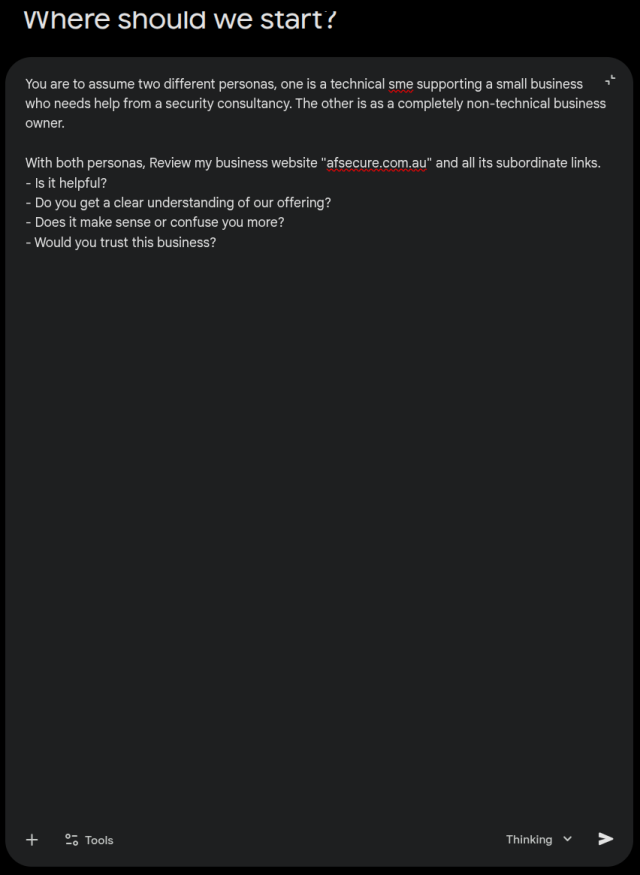

One of the simplest and lowest-risk uses for AI is to simply review your own website and business properties. Get it to "put on different hats" and review your webpage through the eyes of different customers. I’ve found these very helpful in the past to help me polish things up before I resort to the "wife test" (or partner test!). I want to stress, however, that AI isn't a replacement for human feedback. It’s great at seeing inconsistencies, but nuance still largely needs a human touch.

To bring this to life, here’s what Gemini had to say about OUR website. And here’s the exact prompt I used to generate it:

AI also makes a fantastic spell checker and reviewer for consistency. I’m sure you can guess which posts I’ve written with AI performing a final review and which I haven't. The ones without tend to be littered with typos (sorry!) and the ones with an AI review get fixed. Again, it doesn't mean I copy-paste the "suggested AI version." It means I have a clear hit-list of errors to fix myself.

Finally, "Deep Research" modes are a genuine time-saving cheat code. My experience is that they’re a little indiscriminate in the sources they pick, so you must still fact-check everything. But AI’s ability to find 200 sources on a topic and consolidate the key points down to an A4 page is a huge win for a busy business owner.

Removing a finger

One of the big caveats to all of this is: don't use AI for everything! It shows. It looks sloppy.

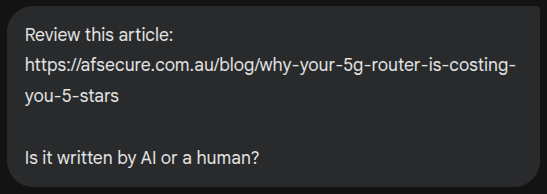

One of the great things about AI is that it’s also good at detecting AI. For a little bit of fun, I decided to do a side-by-side comparison:

Now, before I continue any further, please pop open a new tab. Follow the links above. Go and read those two articles back to back. If you've read enough AI-generated content, one of them should be giving you that uneasy feeling of "a human didn't write this".

Just to be clear, there is now an AI disclaimer on the AI article. I didn't help the machine out; I added that disclaimer after the "AI or Human" report was written. That article is purposely hidden, but I wanted to avoid someone accidentally landing on it and thinking we're in the business of producing AI slop.

So I asked AI to review them for me.

You can see the full reports here: Human_author.pdf vs AI_author.pdf. Using AI everywhere cheapens your work. The very same tools that create "slop" can also detect it, and your customers will notice the lack of personality.

The "skilled tradesman"

Yes, we absolutely use AI tooling. Not using it would be like a tradesman refusing to use a power drill. It just doesn't make sense in a modern context. And I'm absolutely aware of the cognitive dissonance when we just put up this post recently. But that's the reality of the world we're in.

So how DO we use it?

- As a final reviewer: To catch those pesky typos.

- To research and validate: Finding counter-points to our own arguments to ensure we're giving balanced advice.

- To help structure thoughts: Sometimes I’ll dump a "brain-fart" of raw thoughts into Gemini and ask it to help me find the logical flow.

- Imagery: Generating specific visual assets that help explain technical concepts.

To give complete transparency, as I write this, I have one more section to go. Once I'm done, I will pop this entire post into our AI and look for major spelling and formatting errors. Once that's done, I'll come back and get it to generate an image that goes with the article. I won't ask it to "generate an image for this"; I'll tell it exactly what I want - keep an eye out for Gary's Slop Shop.

The dangers & caveats

As we’ve explored, AI is a "power tool" that can either build your business faster or cause a significant injury if mishandled. For Australian SMBs, the risks generally fall into three categories:

- The Privacy Trap: Every time you paste a sensitive client detail or a proprietary business process into a free AI tool, you are potentially broadcasting that information to the world's largest training database. For those in regulated industries like health or finance, this isn't just a "whoops" - it’s a major compliance breach.

- The Hallucination Headache: AI is a "confident liar." It will cite laws that don't exist and invent statistics that sound plausible. If you use AI-generated content without a "human in the loop" to fact-check, you risk your reputation and potentially your professional indemnity.

- The Loss of "Five-Star" Trust: In an era where "AI Slop" is becoming the norm, your unique human voice is your most valuable asset. If your clients start to feel like they are talking to a bot rather than an expert, that hard-earned trust evaporates.

Our advice? Use the power tools, but keep your safety goggles on. Stick to paid, enterprise-grade versions of these tools whenever possible, never put sensitive data into a prompt, and always, always give the final output a human review.